Salesforce keeps getting more capable. Better automation. More intelligence layered into everyday workflows. More expectations from leadership and end users alike.

But even with all of that progress, one thing hasn’t changed. Salesforce reflects what it’s given. When data is incomplete, inconsistent, or duplicated, the system can only work within those constraints.

That’s why data quality continues to be one of the most important factors behind adoption, reporting confidence, and long-term value from Salesforce.

A simple question that surfaces a common gap

During a recent Hunley webinar, we asked attendees how they would rate their Salesforce duplication rate.

The most common response wasn’t a number. It was uncertainty.

That’s not a failure. It’s common. Duplicate data builds gradually through imports, integrations, manual entry, and changing business processes. Over time, it becomes harder to spot without the right visibility.

As a general reference point, Accounts are healthiest when the duplicate rate stays in the low single digits.

Once duplicate rates climb higher, teams start feeling it in subtle ways. Reports require extra explanation. Sales reps hesitate over which record to use. Leadership asks why numbers don’t line up.
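If you have never put a number on it, a rough estimate is easy to produce from an export. The sketch below is only an illustration, not how DataGroomr or Salesforce calculates anything: it assumes a duplicate is any record whose normalized key fields exactly match an earlier record, and the `duplicate_rate` helper and sample data are hypothetical.

```python
from collections import Counter


def duplicate_rate(records, key_fields):
    """Share of records that are extra copies of another record.

    Assumption: two records are duplicates when every key field matches
    after trimming whitespace and lowercasing. Real matching is usually fuzzier.
    """
    def key(rec):
        return tuple(str(rec.get(f) or "").strip().lower() for f in key_fields)

    counts = Counter(key(r) for r in records)
    # Each group of n identical keys contributes n - 1 surplus records.
    extras = sum(n - 1 for n in counts.values())
    return extras / len(records) if records else 0.0


# Hypothetical sample export of Account records.
accounts = [
    {"Name": "Acme Corp", "Website": "acme.com"},
    {"Name": "acme corp ", "Website": "ACME.com"},
    {"Name": "Globex", "Website": "globex.com"},
    {"Name": "Initech", "Website": "initech.com"},
]

rate = duplicate_rate(accounts, ["Name", "Website"])
print(f"Duplicate rate: {rate:.0%}")  # 1 surplus record out of 4 -> 25%
```

Exact-key matching tends to undercount, so treat the result as a floor rather than a final answer.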

Why data quality has become more important, not less

As Salesforce expands its use of AI, data quality carries more weight. AI depends on context stored in your org. Relationships between accounts. Activity history. Contact details. Field consistency.

When that context is fragmented across multiple records or formatted inconsistently, AI doesn’t fail loudly. It fills in gaps quietly. That’s where confidence can slip.

Gaining visibility without slowing everything down

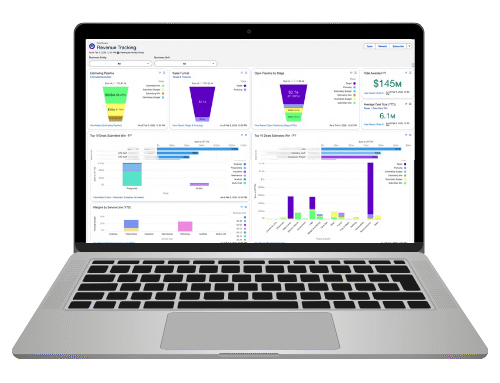

One of the strongest parts of the DataGroomr webinar demo was how quickly teams can understand what’s actually happening in their data.

By connecting to Salesforce through standard APIs, DataGroomr analyzes selected objects like Accounts, Contacts, and Leads, and presents:

- Duplicate counts by object

- How those duplicates trend over time

- Field completeness across records

- Formatting consistency for common fields

- Optional verification results for emails, phone numbers, and addresses

For many teams, this replaces assumptions with clarity.
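To make one of those metrics concrete, field completeness can be thought of as the share of records with a non-blank value in a given field. This is a minimal sketch under that assumption; the `field_completeness` function and the sample contacts are hypothetical and do not represent DataGroomr's own calculation.

```python
def field_completeness(records, fields):
    """Return, per field, the fraction of records with a non-blank value."""
    total = len(records)
    result = {}
    for field in fields:
        # Treat None, empty strings, and whitespace-only strings as blank.
        filled = sum(1 for r in records if str(r.get(field) or "").strip())
        result[field] = filled / total if total else 0.0
    return result


# Hypothetical sample of Contact records.
contacts = [
    {"Email": "a@example.com", "Phone": "555-0100"},
    {"Email": "", "Phone": "555-0101"},
    {"Email": "c@example.com", "Phone": None},
    {"Email": "d@example.com", "Phone": "  "},
]

completeness = field_completeness(contacts, ["Email", "Phone"])
for field, share in completeness.items():
    print(f"{field}: {share:.0%} complete")
```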

Different duplicates require different approaches

Some duplicates are straightforward. Exact matches. Obvious overlaps.

Others are more subtle. Slight naming differences. Alternate emails. Records created through different channels.

DataGroomr supports both traditional matching rules and machine learning models, so teams can address both ends of that spectrum. Multiple models can be used together, allowing organizations to identify obvious duplicates and more nuanced cases without forcing everything into a single rule set.

Duplicate groups are reviewed with confidence scores and side-by-side comparisons so users can see context before deciding how to proceed.
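The difference between obvious and subtle duplicates is easier to see with a small example. The sketch below uses plain string similarity from Python's standard library as a stand-in; it is not DataGroomr's matching rules or machine learning models, and the `classify` function and its 0.85 threshold are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher


def normalize(name):
    """Lowercase, drop commas and periods, and collapse whitespace."""
    return " ".join(name.lower().replace(",", " ").replace(".", " ").split())


def classify(a, b, threshold=0.85):
    """Label a pair as 'exact', 'likely', or 'distinct' with a similarity score.

    The threshold is an arbitrary illustrative value, not a recommended setting.
    """
    na, nb = normalize(a), normalize(b)
    score = SequenceMatcher(None, na, nb).ratio()
    if na == nb:
        return "exact", score
    if score >= threshold:
        return "likely", score
    return "distinct", score


pairs = [
    ("Acme Corp.", "acme corp"),   # same after normalization
    ("Jon Smith", "John Smith"),   # slight naming difference
    ("Acme Corp", "Globex Inc"),   # unrelated
]
for a, b in pairs:
    label, score = classify(a, b)
    print(f"{a!r} vs {b!r}: {label} ({score:.2f})")
```

The score plays a role similar to a confidence value: pairs in the middle band are the ones worth a side-by-side look before anyone merges.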

Cleaning data without losing important history

Merging records often raises concerns about losing information. The demo focused heavily on control and preservation.

Merge behavior is governed by rules that:

- Determine which record remains

- Pull values from other records to retain important details

- Exclude records when there’s a valid reason to keep them separate
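As a rough illustration of how those three kinds of rules fit together, here is a minimal sketch. It assumes the surviving record is the most recently modified one, that blank fields are filled from the other records, and that exclusions are passed in as a set of record Ids. The `merge_group` function, the rule choices, and the sample Ids are hypothetical, not DataGroomr's actual merge behavior.

```python
def merge_group(records, exclude_ids=frozenset()):
    """Merge a duplicate group into one surviving record.

    Returns the merged record, or None if fewer than two records remain
    after exclusions (nothing to merge).
    """
    # Rule 3: leave out records that should stay separate.
    candidates = [r for r in records if r["Id"] not in exclude_ids]
    if len(candidates) < 2:
        return None

    # Rule 1: the most recently modified record survives.
    # ISO-formatted date strings sort correctly as plain text.
    ordered = sorted(candidates, key=lambda r: r["LastModifiedDate"], reverse=True)
    master = dict(ordered[0])

    # Rule 2: fill blank fields on the survivor from the other records.
    for other in ordered[1:]:
        for field, value in other.items():
            if master.get(field) in (None, "") and value not in (None, ""):
                master[field] = value
    return master


# Hypothetical duplicate group; 001C is a separate EU entity kept on purpose.
group = [
    {"Id": "001A", "Name": "Acme Corp", "Phone": "", "LastModifiedDate": "2024-05-01"},
    {"Id": "001B", "Name": "Acme Corp", "Phone": "555-0100", "LastModifiedDate": "2023-11-12"},
    {"Id": "001C", "Name": "Acme Corp (EU)", "Phone": "555-0199", "LastModifiedDate": "2024-06-30"},
]

merged = merge_group(group, exclude_ids={"001C"})
print(merged)
```

Here record 001A survives and picks up the phone number from 001B, while 001C is left untouched.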

If a merge needs to be reversed, options exist depending on retention requirements. The goal isn’t speed at the expense of trust. It’s consistency with safeguards.

Many of these rules can now be created by describing the intent rather than building logic from scratch, which reduces admin overhead without removing oversight.

Preventing duplicates before they enter Salesforce

Ongoing data quality depends on prevention as much as cleanup.

Scheduled deduplication jobs allow teams to review and merge records regularly rather than waiting for issues to pile up. This keeps data healthier over time without requiring constant manual effort.

Imports are another common source of duplicates. Instead of loading data and correcting it later, DataGroomr compares incoming records against existing Salesforce data first. Duplicates can be flagged, ignored, or used to update existing records rather than creating new ones.

This is especially useful for event lists, partner data, and recurring uploads.
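The import check described above can be sketched in a few lines. This is an assumption-heavy illustration rather than DataGroomr's implementation: it matches only on a normalized email address, and the `check_import` function, its `on_duplicate` options, and the sample records are hypothetical.

```python
def check_import(incoming, existing, key_field="Email", on_duplicate="flag"):
    """Compare incoming rows to existing records before anything is created.

    on_duplicate: "flag" (hold for review), "ignore" (drop the row),
    or "update" (apply the row to the existing record).
    """
    def norm(value):
        return (value or "").strip().lower()

    # Index existing records by normalized key for fast lookup.
    index = {norm(r.get(key_field)): r for r in existing if norm(r.get(key_field))}

    result = {"create": [], "flagged": [], "update": []}
    for row in incoming:
        key = norm(row.get(key_field))
        match = index.get(key) if key else None
        if match is None:
            result["create"].append(row)
        elif on_duplicate == "flag":
            result["flagged"].append((row, match["Id"]))
        elif on_duplicate == "update":
            # Carry the existing Id so the row updates instead of inserting.
            result["update"].append({**row, "Id": match["Id"]})
        # "ignore": the duplicate row is skipped entirely.
    return result


# Hypothetical existing Contacts and an event attendee list.
existing = [{"Id": "003A", "Email": "pat@example.com", "Title": ""}]
incoming = [
    {"Email": "Pat@Example.com ", "Title": "VP Sales"},
    {"Email": "lee@example.com", "Title": "Analyst"},
]

plan = check_import(incoming, existing, on_duplicate="update")
print(plan)
```

With `on_duplicate="update"`, the first attendee becomes an update to 003A and only the second is created as a new record.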

Supporting Salesforce as it continues to evolve

Salesforce continues to add capability. Data quality ensures those capabilities have solid footing.

As organizations explore AI and automation, understanding the state of their data becomes a prerequisite, not an afterthought. Not because Salesforce demands it, but because results depend on it.

If you want help assessing where your org stands or thinking through how to approach data quality without disrupting the business, we’re always open to the conversation.

Find out where you stand on AI readiness and what to do next. Talk to Hunley today.